Editor’s note: The DES second data release will be featured at the meeting of the American Astronomical Society. The session “NOIRLab’s Data Services: A Practical Demo Built on Science with DES DR2” takes place on Thursday, Jan. 14, 3:10-4:40 p.m. Central time. The session “Dark Energy Survey: New Results and Public Data Release 2” takes place on Friday, Jan. 15, 11 a.m.-12:30 p.m. Central time.

The Dark Energy Survey, a global collaboration including the Department of Energy’s Fermi National Accelerator Laboratory, the National Center for Supercomputing Applications, and the National Science Foundation’s NOIRLab, has released DR2, the second data release in the survey’s seven-year history. DR2 is the topic of sessions today and tomorrow at the 237th Meeting of the American Astronomical Society, which is being held virtually.

The second data release from the Dark Energy Survey, or DES, is the culmination of more than half a decade of astronomical data collection and analysis, with the ultimate goal of understanding the accelerating expansion of the universe and the phenomenon of dark energy, which is thought to be responsible for that acceleration. It is one of the largest astronomical catalogs released to date.

DR2 includes a catalog of nearly 700 million astronomical objects, building on the 400 million objects cataloged in the survey’s prior data release, DR1. It also improves on DR1 with refined calibration techniques, which, together with DR2’s deeper combined images, lead to improved estimates of the amount and distribution of matter in the universe.

Astronomical researchers around the world can access these unprecedented data and mine them to make new discoveries about the universe, complementary to the studies being carried out by the Dark Energy Survey collaboration. The full data release is online, and members of the public are also welcome to explore it and gain their own insights.

Shown here is the elliptical galaxy NGC 474 with its star shells. Elliptical galaxies are characterized by a relatively smooth appearance compared with spiral galaxies, one of which appears to the left of NGC 474 in this view (oriented with south at the top and west to the left). The colorful neighboring spiral, NGC 470, has a characteristic flocculent structure interwoven with dust lanes and spiral arms. NGC 474 lies about 31 megaparsecs (100 million light-years) from the Sun, in the constellation Pisces. The region surrounding NGC 474 shows unusual structures, described as ‘tidal tails’ or ‘shells of stars’, made up of hundreds of millions of stars. These features are likely due to recent (within the last billion years) mergers of smaller galaxies into the main body of NGC 474, or to close passages of nearby galaxies such as the spiral NGC 470. For coordinate information, visit the NOIRLab webpage for this photo. Photo: DES/NOIRLab/NSF/AURA. Acknowledgments: Image processing: DES, Jen Miller (Gemini Observatory/NSF’s NOIRLab), Travis Rector (University of Alaska Anchorage), Mahdi Zamani & Davide de Martin. Image curation: Erin Sheldon, Brookhaven National Laboratory
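The caption’s two distance figures agree, as a quick unit conversion shows. This minimal sketch assumes the standard rounded value of 1 parsec ≈ 3.26156 light-years; the function name is illustrative.

```python
# Light-years per parsec (standard value, rounded).
LY_PER_PARSEC = 3.26156


def megaparsecs_to_light_years(distance_mpc: float) -> float:
    """Convert a distance in megaparsecs to light-years."""
    return distance_mpc * 1e6 * LY_PER_PARSEC


ngc474_ly = megaparsecs_to_light_years(31)
print(f"NGC 474: about {ngc474_ly / 1e6:.0f} million light-years")  # prints 101
```

Rounded to one significant figure, 31 megaparsecs is the caption’s 100 million light-years.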

DES was designed to map hundreds of millions of galaxies and to discover thousands of supernovae in order to measure the history of cosmic expansion and the growth of large-scale structure in the universe, both of which reflect the nature and amount of dark energy in the universe. DES has produced the largest and most accurate dark matter map from galaxy weak lensing to date, and a new map, three times larger, will be released in the near future.

One early result is a catalog of RR Lyrae, a type of pulsating star, that tells scientists about the region of space beyond the edge of our Milky Way. In this area, nearly devoid of stars, the motion of the RR Lyrae hints at the presence of an enormous “halo” of invisible dark matter, which may provide clues to how our galaxy was assembled over the last 12 billion years. In another result, DES scientists used the extensive DR2 galaxy catalog, along with data from the LIGO experiment, to estimate the location of a black hole merger and, independently of other techniques, infer the value of the Hubble constant, a key cosmological parameter. Combining their data with other surveys, DES scientists have also been able to generate a complete map of the Milky Way’s dwarf satellites, giving researchers insight into how our galaxy formed and how it compares with cosmologists’ predictions.
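The Hubble-constant result rests on a simple idea: the gravitational-wave signal gives the distance to the merger, the galaxy catalog supplies redshifts for candidate host galaxies, and at low redshift the Hubble law relates the two, H0 ≈ cz / d. The sketch below is a deliberately simplified illustration under that low-redshift assumption. The redshift and distance are hypothetical inputs, and a real analysis weighs many candidate host galaxies statistically rather than relying on a single one.

```python
# Speed of light in km/s.
C_KM_S = 299_792.458


def hubble_constant(redshift: float, distance_mpc: float) -> float:
    """Low-redshift Hubble-law estimate H0 = c*z / d, in km/s/Mpc.

    Valid only for z << 1; a real analysis uses the full cosmological
    distance-redshift relation and many candidate host galaxies.
    """
    return C_KM_S * redshift / distance_mpc


# Hypothetical inputs, chosen only for illustration.
print(f"H0 ≈ {hubble_constant(0.01, 43.0):.1f} km/s/Mpc")
```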

Covering 5,000 square degrees of the southern sky (one-eighth of the entire sky) and spanning billions of light-years, the survey data enable many investigations beyond those targeting dark energy, across a vast range of cosmic distances: from discovering new objects in our own solar system to investigating the nature of the first star-forming galaxies in the early universe.
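The “one-eighth of the entire sky” figure can be checked directly. The whole sky spans 4π steradians, and one steradian equals (180/π)² square degrees; this short sketch converts that to square degrees and compares it with the survey footprint.

```python
import math

# Whole sky = 4π steradians; one steradian = (180/π)^2 square degrees.
FULL_SKY_DEG2 = 4 * math.pi * (180 / math.pi) ** 2  # ≈ 41,253 deg²

des_area_deg2 = 5_000
fraction = des_area_deg2 / FULL_SKY_DEG2
print(f"{fraction:.3f} of the sky, about 1/{1 / fraction:.1f}")
```

The result, a little over 12%, rounds to the one-eighth quoted above.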

“This is a momentous milestone. For six years, the Dark Energy Survey collaboration took pictures of distant celestial objects in the night sky. Now, after carefully checking the quality and calibration of the images captured by the Dark Energy Camera, we are releasing this second batch of data to the public,” said DES Director Rich Kron of Fermilab and the University of Chicago. “We invite professional and amateur scientists alike to dig into what we consider a rich mine of gems waiting to be discovered.”

This irregular dwarf galaxy, named IC 1613, contains some 100 million stars (bluish in this portrayal). It is a member of our Local Group of galaxy neighbors, a collection that also includes our Milky Way, the Andromeda spiral and the Magellanic Clouds. Located 2.4 million light-years away, it contains several examples of Cepheid variable stars, key calibrators of the cosmic distance ladder. The bulk of its stars formed about 7 billion years ago, and it does not appear to be forming stars at the present day, unlike very active dwarf irregulars such as the Large and Small Magellanic Clouds. To the lower right of IC 1613 (oriented with north to the left and east down in this view) is a background galaxy cluster, several hundred times more distant than IC 1613, consisting of dozens of orange-yellow blobs centered on a pair of giant cluster elliptical galaxies. To the left of the irregular galaxy is a bright, sixth-magnitude foreground Milky Way star in the constellation Cetus the Whale, identifiable as a star by its sharp diffraction spikes radiating at 45-degree angles. For coordinate information, visit the NOIRLab webpage for this photo. Photo: DES/NOIRLab/NSF/AURA. Acknowledgments: Image processing: DES, Jen Miller (Gemini Observatory/NSF’s NOIRLab), Travis Rector (University of Alaska Anchorage), Mahdi Zamani & Davide de Martin

The primary tool for collecting these images, the DOE-built Dark Energy Camera, is mounted on the NSF-funded Víctor M. Blanco 4-meter Telescope at Cerro Tololo Inter-American Observatory in the Chilean Andes, a program of NSF’s NOIRLab. Each week, the survey collected thousands of pictures of the southern sky, unlocking a trove of potential cosmological insights.

Once captured, these images (and the large amount of data associated with them) are transferred to the National Center for Supercomputing Applications for processing by the DES Data Management project. Using the Blue Waters supercomputer at NCSA, the Illinois Campus Cluster and computing systems at Fermilab, NCSA prepares calibrated data products for public and research use. It takes approximately four months to process one year’s worth of data into a searchable, usable catalog.

The detailed precision cosmology constraints based on the full six-year DES data set will come out over the next two years.

DES DR2 is hosted at the Community Science and Data Center, a program of NOIRLab. CSDC provides software systems, user services and development initiatives to connect and support the scientific missions of NOIRLab’s telescopes, including the Blanco Telescope at Cerro Tololo Inter-American Observatory.

NCSA, NOIRLab and the LIneA Science Server collectively provide the tools and interfaces that enable access to DR2.

The Dark Energy Survey uses a 570-megapixel camera mounted on the Blanco Telescope, at Cerro Tololo Inter-American Observatory in Chile, to image 5,000 square degrees of southern sky. Photo: Fermilab

“Because astronomical data sets today are so vast, the cost to handle them is prohibitive for individual researchers or most organizations. CSDC provides open access to big astronomical data sets like DES DR2 and the necessary tools to explore and exploit them — then all it takes is someone from the community with a clever idea to discover new and exciting science,” said Robert Nikutta, project scientist for Astro Data Lab at CSDC.

“With information on the positions, shapes, sizes, colors and brightnesses of over 690 million stars, galaxies and quasars, the release promises to be a valuable source for astronomers and scientists worldwide to continue their explorations of the universe, including studies of matter (light and dark) surrounding our home Milky Way galaxy, as well as pushing further to examine groups and clusters of distant galaxies, which hold precise evidence about how the size of the expanding universe changes over time,” said Dark Energy Survey Data Management Project Scientist Brian Yanny of Fermilab.

This work is supported in part by the U.S. Department of Energy Office of Science.

About DES

The Dark Energy Survey is a collaboration of more than 400 scientists from 26 institutions in seven countries. Funding for the DES Projects has been provided by the U.S. Department of Energy, the U.S. National Science Foundation, the Ministry of Science and Education of Spain, the Science and Technology Facilities Council of the United Kingdom, the Higher Education Funding Council for England, the National Center for Supercomputing Applications at the University of Illinois at Urbana-Champaign, the Kavli Institute of Cosmological Physics at the University of Chicago, Funding Authority for Studies and Projects in Brazil, Carlos Chagas Filho Foundation for Research Support of the State of Rio de Janeiro, Brazilian National Council for Scientific and Technological Development and the Ministry of Science, Technology and Innovation, the German Research Foundation and the collaborating institutions in the Dark Energy Survey, the list of which can be found at www.darkenergysurvey.org/collaboration.

About NSF’s NOIRLab

NSF’s NOIRLab (National Optical-Infrared Astronomy Research Laboratory), the US center for ground-based optical-infrared astronomy, operates the international Gemini Observatory (a facility of NSF, NRC–Canada, ANID–Chile, MCTIC–Brazil, MINCyT–Argentina and KASI–Republic of Korea), Kitt Peak National Observatory (KPNO), Cerro Tololo Inter-American Observatory (CTIO), the Community Science and Data Center (CSDC), and Vera C. Rubin Observatory. It is managed by the Association of Universities for Research in Astronomy (AURA) under a cooperative agreement with NSF and is headquartered in Tucson, Arizona. The astronomical community is honored to have the opportunity to conduct astronomical research on Iolkam Du’ag (Kitt Peak) in Arizona, on Maunakea in Hawaiʻi, and on Cerro Tololo and Cerro Pachón in Chile. We recognize and acknowledge the very significant cultural role and reverence that these sites have to the Tohono O’odham Nation, to the Native Hawaiian community, and to the local communities in Chile, respectively.

About NCSA

NCSA at the University of Illinois at Urbana-Champaign provides supercomputing and advanced digital resources for the nation’s science enterprise. At NCSA, University of Illinois faculty, staff, students, and collaborators from around the globe use advanced digital resources to address research grand challenges for the benefit of science and society. NCSA has been advancing one third of the Fortune 50® for more than 30 years by bringing industry, researchers, and students together to solve grand challenges at rapid speed and scale. For more information, please visit www.ncsa.illinois.edu.

About Fermilab

Fermilab is America’s premier national laboratory for particle physics and accelerator research. A U.S. Department of Energy Office of Science laboratory, Fermilab is located near Chicago, Illinois, and operated under contract by the Fermi Research Alliance LLC, a joint partnership between the University of Chicago and the Universities Research Association, Inc. Visit Fermilab’s website at www.fnal.gov and follow us on Twitter at @Fermilab.

The DOE Office of Science is the single largest supporter of basic research in the physical sciences in the United States and is working to address some of the most pressing challenges of our time. For more information, please visit science.energy.gov.

The U.S. Department of Energy Office of Science has awarded funding to Fermilab to use machine learning to improve the operational efficiency of Fermilab’s particle accelerators.

These machine learning algorithms, developed for the lab’s accelerator complex, will enable the laboratory to save energy, provide accelerator operators with better guidance on maintenance and system performance, and better inform the research timelines of scientists who use the accelerators.

Engineers and scientists at Fermilab, home to the nation’s largest particle accelerator complex, are designing the programs to work at a systemwide scale, tracking all of the data resulting from the operation of the complex’s nine accelerators. The pilot system will be used on only a few accelerators, with the plan to extend the program tools to the entire accelerator chain.

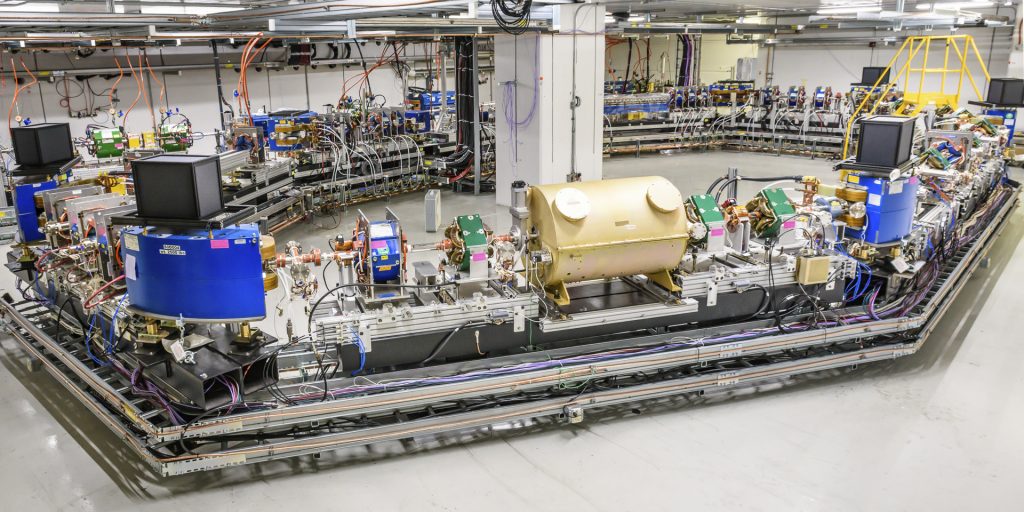

Engineers and scientists at Fermilab are designing machine learning programs for the lab’s accelerator complex. These algorithms will enable the laboratory to save energy, give accelerator operators better guidance on maintenance and system performance, and better inform the research timelines of scientists who use the accelerators. The pilot system will be used on the Main Injector and Recycler, pictured here. It will eventually be extended to the entire accelerator chain. Photo: Reidar Hahn, Fermilab

Fermilab engineer Bill Pellico and Fermilab accelerator scientist Kiyomi Seiya are leading a talented team of engineers, software experts and scientists in deploying and integrating a framework that uses machine learning on two distinct fronts.

Untangling complex data

Creating a machine learning program for a single particle accelerator is difficult, Pellico said, and Fermilab is home to nine. The work requires tracking all of the individual accelerator subsystems at once.

“Manually keeping track of every subsystem at the level required for optimum performance is impossible,” Pellico said. “There’s too much information coming from all these different systems. To monitor the hundreds of thousands of devices we have and make some sort of correlation between them requires a machine learning program.”

The diagnostic signals pinging from individual subsystems form a vast informational web, and the data appear on screens that cover the walls of Fermilab’s Main Control Room, command central for the accelerator complex.

Particle accelerator operators track all the individual accelerator subsystems at once. The diagnostic signals pinging from individual subsystems form a vast informational web, and the data appear on screens that cover the walls of Fermilab’s Main Control Room, command central for the accelerator complex, pictured here. A machine learning program will not only track signals, but also quickly determine what specific system requires an operator’s attention. Photo: Reidar Hahn, Fermilab

The data — multicolored lines, graphs and maps — can be overwhelming. On one screen an operator may see data requests from scientists who use the accelerator facility for their experiments. Another screen might show signals that a magnet controlling the particle beam in a particular accelerator needs maintenance.

Right now, operators must perform their own kind of triage, identifying and prioritizing the most important incoming signals, which range from researcher requests to warnings about a problem-causing component. A machine learning program, on the other hand, will be capable not only of tracking signals but also of quickly determining which specific system requires an operator’s attention.
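The triage described here is, at its core, a prioritization problem. As a hypothetical sketch, a priority queue can always surface the most urgent signal first. The signal names and severity scores below are invented for illustration, and the planned Fermilab system would learn its priorities from data rather than rely on fixed scores.

```python
import heapq


def triage(signals):
    """Return (severity, source, message) tuples, most severe first.

    `signals` is an iterable of (severity, source, message); higher severity
    means more urgent. Ties keep their arrival order.
    """
    heap = []
    for arrival, (severity, source, message) in enumerate(signals):
        # heapq is a min-heap: negate severity so the largest pops first,
        # and use arrival order as a tie-breaker.
        heapq.heappush(heap, (-severity, arrival, source, message))
    ordered = []
    while heap:
        neg_severity, _, source, message = heapq.heappop(heap)
        ordered.append((-neg_severity, source, message))
    return ordered


# Hypothetical incoming signals: (severity, source, message).
incoming = [
    (2, "experiment", "beam-time request"),
    (9, "magnet", "field strength drifting"),
    (5, "vacuum", "pressure slightly high"),
]
for severity, source, message in triage(incoming):
    print(severity, source, message)
```

A heap rather than a one-off sort suits a control room, where new signals keep arriving while older ones are being handled.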

“Different accelerator systems perform different functions that we want to track all on one system, ideally,” Pellico said. “A system that can learn on its own would untangle the web and give operators information they can use to catch failures before they occur.”

In principle, the machine learning techniques, when employed on such a large amount of data, would be able to pick up on the minute, unusual changes in a system that would normally get lost in the wave of signals accelerator operators see from day to day. This fine-tuned monitoring would, for example, alert operators to needed maintenance before a system showed outward signs of failure.

Say the program brought to an operator’s attention an unusual trend or anomaly in a magnet’s field strength, a possible sign of a weakening magnet. The advance alert would enable the operator to address it before it rippled into a larger problem for the particle beam or the lab’s experiments. These efforts could also help lengthen the life of the hardware in the accelerators.
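One classical way to catch a slow drift like a weakening magnet is a cumulative-sum (CUSUM) change detector, which adds up small shortfalls below a nominal value until they cross an alarm limit. This is a standard statistical technique swapped in for illustration, not the machine learning method Fermilab is developing. The nominal field, slack and alarm limit below are hypothetical values in arbitrary units.

```python
def cusum_drop_alarm(readings, target, slack, limit):
    """One-sided CUSUM detector for a downward drift.

    Accumulates how far each reading falls below `target`, minus an allowed
    `slack` for ordinary noise, and returns the index of the first reading at
    which the running sum exceeds `limit` (or None if it never does).
    """
    s = 0.0
    for i, x in enumerate(readings):
        s = max(0.0, s + (target - x) - slack)
        if s > limit:
            return i
    return None


# Hypothetical magnet field readings (arbitrary units): 50 steady readings with
# small alternating noise, followed by a slow, steady weakening.
steady = [1.0 + (1e-4 if i % 2 else -1e-4) for i in range(50)]
weakening = [1.0 - 5e-4 * (k + 1) for k in range(50)]
readings = steady + weakening

alarm = cusum_drop_alarm(readings, target=1.0, slack=2e-4, limit=5e-3)
print(f"alarm raised at reading {alarm}")
```

Because the slack absorbs ordinary noise, the steady stretch never raises an alarm, while the drift is flagged within a few readings of its onset.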

Improving beam quality and increasing machine uptime

While the overarching machine learning framework will process countless pieces of information flowing through Fermilab’s accelerators, a separate program will keep track of (and keep up with) information coming from the particle beam itself.

Any program tasked with monitoring, in real time, a particle beam traveling at nearly the speed of light will need to be extremely responsive. Seiya’s team will code machine learning programs onto fast computer chips to measure and respond to beam data within milliseconds.

Currently, accelerator operators monitor the overall operation and judge whether the beam needs to be adjusted to meet the requirements of a specific experiment, while computer systems help keep the beam stable locally. A machine learning program, by contrast, will be able to monitor both global and local aspects of the operation and pick up on critical beam information in real time, leading to faster improvements in beam quality and reduced beam loss: the inevitable loss of beam to the beam pipe walls.

“Information from the accelerator chain will pass through this one system,” said Seiya, the Fermilab scientist leading the machine learning program for beam quality. “The machine learning model will then be able to figure out the best action for each.”

That best action depends on which aspect of the beam needs to be adjusted at a given moment. For example, monitors along the particle beamline will send signals to the machine-learning-based program, which will be able to determine whether the particles need an extra kick to keep them traveling in the optimal position in the beam pipe. If something unusual occurs in the accelerator, the program can scan the patterns in the monitor signals and decide whether the beam has to be stopped.
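The two decisions described here, applying a corrective kick or stopping the beam, can be illustrated with a simple rule-based stand-in. This hypothetical sketch uses a fixed abort threshold and a proportional correction where the planned system would use a trained machine learning model. The monitor readings, gain and abort limit are invented values.

```python
from dataclasses import dataclass


@dataclass
class BeamDecision:
    abort: bool
    kicks_mm: list  # corrective offsets, one per monitor (empty if aborting)


def decide(monitor_offsets_mm, gain=0.5, abort_limit_mm=5.0):
    """Hypothetical rule-based stand-in for the learned policy in the text.

    Stops the beam if any monitor sees an offset beyond `abort_limit_mm`;
    otherwise returns a proportional kick that pushes each offset back
    toward the ideal orbit (zero offset).
    """
    if any(abs(x) > abort_limit_mm for x in monitor_offsets_mm):
        return BeamDecision(abort=True, kicks_mm=[])
    return BeamDecision(abort=False,
                        kicks_mm=[-gain * x for x in monitor_offsets_mm])


print(decide([0.4, -1.2, 0.1]))  # small offsets: apply kicks
print(decide([0.4, 7.5, 0.1]))   # one large offset: stop the beam
```

A learned model would replace the fixed threshold with patterns across many monitors, but the output has the same shape: a stop-or-continue flag plus corrections.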

A tool for everyone

While every accelerator has its own needs, the accelerator-beam machine learning program implemented at Fermilab could be adapted for other particle accelerators, providing a model for operators beyond Fermilab.

“We’re ultimately creating a tool set for everyone to use,” Seiya said. “These techniques will bring new and unique capabilities to accelerator facilities everywhere. The methods we develop could also be implemented at other accelerator complexes with minimal tweaks.”

This tool set will also save energy for the laboratory. Pellico estimates that 7% of an accelerator’s energy goes unused due to a combination of suboptimal operation, unscheduled maintenance and unnecessary equipment usage. This isn’t surprising considering the number of systems required to run an accelerator. Improvements in energy conservation make for greener accelerator operation, he said, and machine learning is how the lab will get there.

“The funding from DOE takes us a step closer to that goal,” he said.

The capabilities of this real-time tuning will be demonstrated on the beamline for Fermilab’s Mu2e experiment and in the Main Injector, with the eventual goal of incorporating the program into all Fermilab experiment beamlines. That includes the beam generated by the upcoming PIP-II accelerator, which will become the heart of the Fermilab complex when it comes online in the late 2020s. PIP-II will power particle beams for the international Deep Underground Neutrino Experiment, hosted by Fermilab, as well as provide for the long-term future of the Fermilab research program.

Both of the lab’s new machine learning applications, working in tandem, will benefit accelerator operation and the laboratory’s particle physics experiments. By processing patterns and signals from large amounts of data, machine learning gives scientists the tools they need to produce the best research possible.


Before researchers can smash together beams of particles to study high-energy particle interactions, they need to create those beams in particle accelerators. And the tighter the particles are packed in the beams, the better scientists’ chances of spotting rare physics phenomena.

Making a particle beam denser or brighter is akin to sticking an inflated balloon in the freezer. Just as reducing the random motion of the gas molecules inside the balloon causes the balloon to shrink, reducing the random motion of the particles in a beam makes the beam denser. But physicists do not have freezers for particles moving near the speed of light — so they devise other clever ways to cool down the beam.

An experiment underway at Fermilab’s Integrable Optics Test Accelerator seeks to be the first to demonstrate optical stochastic cooling, a new beam cooling technology that has the potential to dramatically speed up the cooling process. If successful, the technique would enable future experiments to generate brighter beams of charged particles and study previously inaccessible physics.

Fermilab’s optical stochastic cooling experiment is now underway at the 40-meter-circumference Integrable Optics Test Accelerator, a versatile particle storage ring designed to pursue innovations in accelerator science. Photo: Giulio Stancari, Fermilab

“There is this range of energies — about 10 to 1,000 GeV — where there currently exists no technology for cooling protons, and that’s where optical stochastic cooling could be applied at the moment,” said Fermilab scientist Alexander Valishev, the leader of the team that designed and constructed IOTA. “But if we develop it, then I’m sure there will be other applications.”

In January, IOTA’s OSC experiment started taking data. IOTA is supported by the U.S. Department of Energy Office of Science.

OSC operates on the same principle as conventional stochastic cooling, a technology developed by Simon van der Meer and harnessed by Carlo Rubbia for the 1983 discovery of the W and Z bosons. Van der Meer and Rubbia won the 1984 Nobel Prize in physics for their work, which has since found use in many particle accelerators.

Stochastic cooling provides a way to measure how the particles in a beam move away from the desired trajectory and apply corrections to nudge them closer together, thus making the beam denser. The technique hinges on the interplay between charged particles and the electromagnetic radiation they emit.

As charged particles such as electrons or protons move in a curved path, they radiate energy in the form of light, which a pickup in the accelerator detects. Each light signal contains information about the average position and velocity of a “bunch” of millions or billions of particles.

Then an electromagnetic device called a kicker applies this same signal back onto the bunch to correct any stray motion, like a soccer player kicking a ball to keep it in bounds. Each kick brings the average particle position and velocity closer to the desired value, but individual particles can still drift away. To correct the motion of individual particles and create a dense beam, the process must be repeated many thousands of times as the beam circulates in the accelerator.
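The measure-and-kick loop can be illustrated with a toy simulation. This is a simplified model under stated assumptions, not a description of any real cooling system: each particle’s deviation from the desired trajectory is a single number, particles are assumed to be randomly re-mixed between passes so that each pass groups them into new samples, and the kicker removes a fraction `gain` of each sample’s measured average. The function name and parameters are illustrative.

```python
import math
import random


def rms(values):
    """Root-mean-square deviation from the ideal trajectory (zero)."""
    return math.sqrt(sum(v * v for v in values) / len(values))


def simulate_cooling(n_particles=2_000, sample_size=100, gain=1.0,
                     turns=100, seed=1):
    """Toy stochastic cooling model: return the RMS deviation after each turn.

    Each turn, particles are randomly re-mixed, split into samples of
    `sample_size`, and every particle in a sample is kicked by
    -gain * (average deviation of that sample), which is all the pickup sees.
    """
    rng = random.Random(seed)
    x = [rng.gauss(0.0, 1.0) for _ in range(n_particles)]
    history = [rms(x)]
    for _ in range(turns):
        rng.shuffle(x)  # assumed ideal mixing between passes
        for start in range(0, n_particles, sample_size):
            sample = x[start:start + sample_size]
            kick = -gain * sum(sample) / len(sample)
            x[start:start + sample_size] = [v + kick for v in sample]
        history.append(rms(x))
    return history


history = simulate_cooling()
print(f"RMS deviation: {history[0]:.3f} -> {history[-1]:.3f} "
      f"after {len(history) - 1} turns")
```

Because each kick removes only a sample’s average, at full gain the squared spread shrinks by roughly a fraction 1/`sample_size` per turn, which is why realistic beams need thousands of passes. Rerunning with a smaller `sample_size` cools faster, in line with the article’s point that smaller groups of particles need fewer kicks.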

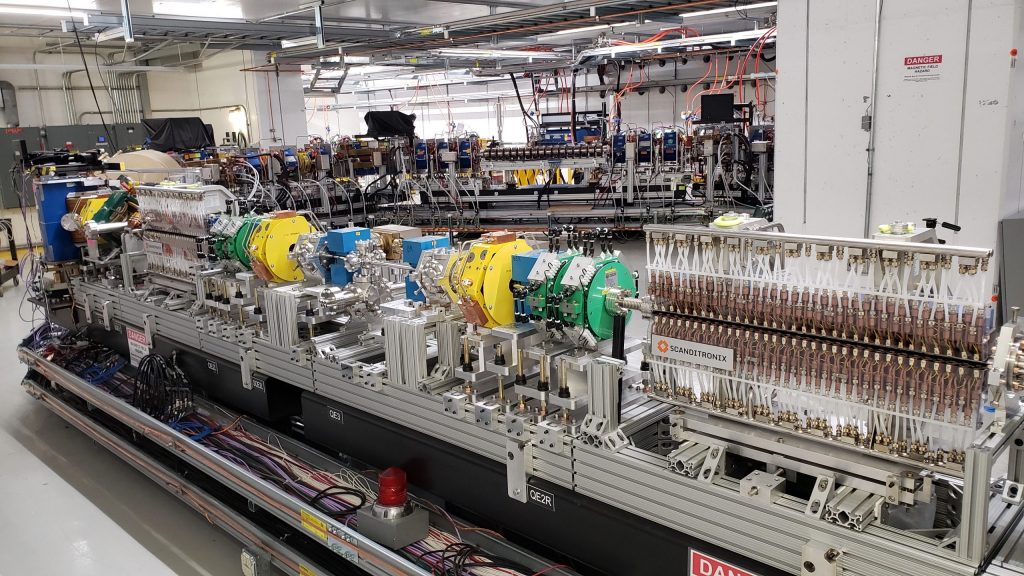

The two components with Scanditronix logos pictured here are the heart of IOTA’s optical stochastic cooling experiment. The pickup (left) measures the spread of the particle beam, and then the kicker (right) corrects the stray motion of the particles. Photo: Jonathan Jarvis, Fermilab

Traditional stochastic cooling uses electromagnetic signals in the microwave range, with centimeter-long wavelengths. OSC uses visible and infrared light, with wavelengths around a micron — a millionth of a meter.

“The scale is set by the wavelength,” Valishev said. “The shorter wavelengths mean we can read the beam information with higher resolution and better pinpoint corrections.”
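To see why wavelength sets the scale, convert each wavelength to a frequency with f = c / λ. As a rough rule, a stochastic cooling system’s achievable cooling rate grows with its bandwidth, which scales roughly with its operating frequency. The representative wavelengths below (1 cm for microwaves, 1 micron for OSC) are round numbers based on the article’s descriptions; real systems differ.

```python
C_M_PER_S = 299_792_458  # speed of light in m/s


def frequency_hz(wavelength_m: float) -> float:
    """Frequency of light with the given wavelength, f = c / λ."""
    return C_M_PER_S / wavelength_m


microwave = frequency_hz(1e-2)  # ~1 cm: conventional stochastic cooling
optical = frequency_hz(1e-6)    # ~1 micron: optical stochastic cooling
print(f"microwave: {microwave / 1e9:.0f} GHz")
print(f"optical:   {optical / 1e12:.0f} THz")
print(f"ratio:     {optical / microwave:,.0f}")
```

The ratio of about 10,000 is roughly consistent with the factor of 10,000 the article cites for OSC’s potential speedup.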

The higher resolution allows OSC to provide more precise kicks to smaller groups of particles. Smaller groups of particles require fewer kicks in order to cool, just as a tiny balloon cools faster than a large one when put in the freezer. Each particle gets kicked once per lap around the accelerator. Since fewer kicks are required, the entire beam cools after fewer laps.

In principle, OSC could speed up beam cooling by a factor of 10,000 compared to conventional stochastic cooling. The first demonstration experiment at IOTA, which is using a medium-energy electron beam, has a more modest goal. As the beam circulates in the accelerator and radiates light, it loses energy, cooling on its own in about 1 second; IOTA seeks a tenfold decrease in that cooling time.
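Treating the beam’s spread as decaying exponentially with a characteristic cooling time τ, a common simplification, shows what the tenfold goal means in practice. The 1-second natural cooling time comes from the article; the 0.1-second value simply applies the stated tenfold reduction, and shrinking the spread by a factor of 10 is an arbitrary illustrative target.

```python
import math


def time_to_shrink(factor: float, tau_s: float) -> float:
    """Time for a spread decaying as exp(-t / tau) to shrink by `factor`."""
    return tau_s * math.log(factor)


for label, tau in [("natural radiation cooling", 1.0), ("with OSC (goal)", 0.1)]:
    print(f"{label}: {time_to_shrink(10.0, tau):.2f} s to shrink the spread tenfold")
```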

Proposals for OSC piqued the interest of the accelerator community as early as the 1990s, but so far a successful implementation has eluded researchers. Harnessing shorter wavelengths of light raises a host of technical challenges.

“The relative positions of all the relevant elements need to be controlled at the level of a quarter of a wavelength or better,” Valishev said. “In addition to that, you have to read the wave packet from the beam, and then you have to transport it, amplify it, and then apply it back onto the same beam. Again, everything must be done with this extreme precision.”
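Applying the quarter-wavelength rule from the quote to round-number wavelengths shows the jump in required precision. The wavelengths are illustrative, based on the article’s “around a micron” for OSC and “centimeter-long” for microwaves.

```python
def quarter_wave_tolerance_m(wavelength_m: float) -> float:
    """Positioning tolerance of a quarter wavelength, per the rule quoted above."""
    return wavelength_m / 4


print(f"OSC (1 micron):   {quarter_wave_tolerance_m(1e-6) * 1e9:.0f} nm")
print(f"microwave (1 cm): {quarter_wave_tolerance_m(1e-2) * 1e3:.1f} mm")
```

Elements that could sit a couple of millimeters off in a microwave system must instead be held to a few hundred nanometers.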

IOTA proved the perfect accelerator for the job. The centerpiece of the Fermilab Accelerator Science and Technology facility, IOTA has a flexible design that allows researchers to tailor the components in the beamline as they push the frontiers of accelerator science.

IOTA’s OSC experiment is beginning with electrons because these lightweight particles can easily and cheaply be accelerated to the speeds at which they radiate visible and infrared light. In the future, scientists hope to apply the technique to protons. Due to their larger mass, protons must reach higher energies to radiate the desired light, making them more difficult to handle.
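The mass argument can be made concrete. The light a relativistic particle emits in a magnetic undulator is set largely by its Lorentz factor γ = E / (mc²) (for a weak undulator, λ ≈ λ_u / (2γ²), with λ_u the magnet period), so reaching the same wavelength takes roughly the same γ. Because a proton’s rest energy is about 1,836 times an electron’s, it needs roughly 1,836 times the energy. The 100 MeV electron energy below is an illustrative round number, not a quoted IOTA parameter.

```python
ELECTRON_REST_MEV = 0.51099895  # electron rest energy, m_e c^2
PROTON_REST_MEV = 938.27208816  # proton rest energy, m_p c^2


def lorentz_factor(total_energy_mev: float, rest_energy_mev: float) -> float:
    """γ = E / (m c^2), using total energy."""
    return total_energy_mev / rest_energy_mev


def proton_energy_for_same_gamma(electron_energy_mev: float) -> float:
    """Total proton energy (MeV) with the same γ as an electron of the given total energy."""
    gamma = lorentz_factor(electron_energy_mev, ELECTRON_REST_MEV)
    return gamma * PROTON_REST_MEV


electron_mev = 100.0  # illustrative round number
print(f"gamma = {lorentz_factor(electron_mev, ELECTRON_REST_MEV):.0f}")
print(f"proton energy for the same gamma: "
      f"{proton_energy_for_same_gamma(electron_mev) / 1e3:.0f} GeV")
```

The result, on the order of 180 GeV, falls inside the 10 to 1,000 GeV proton range that Valishev identifies as lacking a cooling technology.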

At first, IOTA will study passive cooling, in which the light emitted by the electron beam will not be amplified before being shined back on the beam. After that simplified approach succeeds, the team will add optical amplifiers to strengthen the light that provides the corrective kicks.

In addition to providing a new cooling technology for high-energy particle colliders, OSC could enhance the study of fundamental electrodynamics and interactions between electrons and photons.

“Optical stochastic cooling is a blend of various areas of modern experimental physics, from accelerators and beams to light optics, all merged in one package,” Valishev said. “That makes it very challenging and also very stimulating to work on.”
