In a little over a month, a team of physicists and engineers from around the world took a simplified ventilator design from concept all the way through approval by the U.S. Food and Drug Administration. This major milestone marks the ventilator as safe for use in the United States under the FDA’s Emergency Use Authorization, which helps support public health during a crisis.

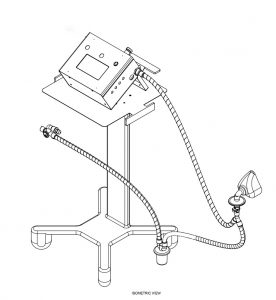

Physicists and engineers from nine countries helped build the Mechanical Ventilator Milano, which has been approved for use by the U.S. Food and Drug Administration. Image: MVM collaboration

The Mechanical Ventilator Milano, or MVM, is the brainchild of physicist Cristiano Galbiati. The Gran Sasso Science Institute and Princeton University professor, who normally leads a dark matter experiment in Italy called DarkSide-20k, found himself in lockdown in Milan, a city hit hard by COVID-19. Hearing reports of ventilator shortages and wanting to help, Galbiati reached out to fellow researchers to develop a ventilator with minimal components that could be quickly produced using commonly available parts.

“The sense of crisis was palpable, and I knew the availability of ventilators was critical,” said Galbiati, who obtained his Ph.D. from the University of Milan. “We had been doing some complicated projects in physics that required working with gases, and I thought it our duty to find a way to push oxygen into the lungs of patients.”

Word spread quickly, with engineers and physicists in nine countries – especially Italy, the United States and Canada – joining in to help. At the U.S. Department of Energy’s Fermilab, the researchers who typically spend their days building and running delicate detectors quickly applied their skills and volunteered their time to build a device for delicate lungs.

“There’s a huge benefit we’ve gained from the way particle physics collaborations work,” said Steve Brice, the head of Fermilab’s Neutrino Division. “The structure already in place has large, international, multidisciplinary groups. We can re-task that structure to work on something different, and you can move much more quickly.”

The MVM is inspired by the Manley ventilator built in the 1960s. The design is simple, inexpensive, compact and requires only compressed oxygen (or medical air) and a source of electrical power to run. The modern twist comes from the electronics and the control system.

“We’re concentrating on the software and letting the hardware be as minimal as it can be,” said Stephen Pordes, a member of DarkSide and a Fermilab scientist stationed at CERN to work on a prototype detector for the Deep Underground Neutrino Experiment, known as DUNE. He has volunteered his time to coordinate some of the MVM efforts alongside Cary Kendziora from Fermilab’s Particle Physics Division. “This project has been growing organically. People see a place where there is a need, and they take their own initiative to help and jump in.”

And volunteers have jumped in with a wide range of skills. Fermilab technical editor Anne Heavey joined to work on documentation and the user manual, repurposing the formatting of the recently published Technical Design Report for DUNE. Elena Gramellini, an Italian neutrino physicist working at Fermilab, liaised with doctors on the front lines in Italy. Jen Raaf, a neutrino physicist who works on liquid-argon experiments, worked with the medical device manufacturer Elemaster and led the effort to bring together all the elements needed for FDA approval.

“I wanted to feel like I was doing something useful,” Raaf said. “It’s really nice to see humankind doing good things for other people.”

The project has not been limited to the researchers. While working through the prototypes, the team made sure to engage doctors, medical device manufacturers and regulators to ensure they were making something useful for hospitals, creating something with a robust supply chain that could be quickly produced and building the ventilator to the right specifications.

“One of the key problems was translating between what the machine technically does and how operators would interact with the machine itself,” Gramellini said. “It’s been a lot of learning on my part in trying to see the ventilator the way that a clinician would operate the machine.”

Experts from industry and medicine made themselves readily available for consults; doctors tested the MVM prototypes on breathing simulators. Underlying all the long extra hours and stress of the intense project was a sense of urgency and motivation for all involved.

“I have never seen anything come together at this speed,” Heavey said. “It’s just phenomenal.”

With collaborators spread across 10 different time zones, work on various systems was able to proceed nearly around the clock, allowing MVM to progress from posting a preprint paper on March 23 to FDA approval on May 1.

“It’s in our DNA to collaborate across borders and in real-time as particle physicists,” Galbiati said. “As borders went up and supply chains became more difficult, it remained a beacon of hope to me to be able to collaborate internationally. It is important to see that while the virus is spreading around the world at the speed of jets, the research is spreading at the speed of the internet. And if there’s one way that the virus will be defeated, it’s if the research can prevail.”

While physicists are used to collaborating from a distance, doing so while teleworking and social distancing added a new wrinkle. Working from home, researchers didn’t have access to all the tools they would have in the lab – or all the parts they needed to test in one place. Instead, they connected various components over the internet. So a microcontroller in Italy could connect and receive software written in the United States, then have someone test the interface on a touch screen in Canada.

“If you had asked me before all this if it was possible, I would have said no, it would take months,” said Marco Del Tutto, a neutrino physicist at Fermilab who worked on the software and microcontrollers along with Scientific Computing Division’s Gennadiy Lukhanin. “I’ve never done anything like this before, and we weren’t sure if we would succeed. But we all agreed we should at least try. We owed that to everyone.”

By early April, completed prototype MVM units in temporary 3-D-printed cases were making their way through rigorous tests in Italy and with collaborators around the world – and they worked. Eric Dahl, a Fermilab and Northwestern University scientist, was able to use a breathing simulator at Northwestern Simulation (a clinical training center that is part of the Feinberg School of Medicine) to test one of the first prototypes and provide input on the way to the approved design. The MVM now works properly in two modes: full ventilation of a patient and breathing support.

“This effort is the demonstration that the particle physics community pays attention to the application of basic research for social needs,” said Fernando Ferroni, professor at Gran Sasso Science Institute and the past president of INFN, Italy’s National Institute for Nuclear Physics. “Having applied the efforts of hundreds of people in a very efficient fashion was possible because of the level of organization and shared vision of this community. It’s an amazing result, indeed.”

The end result is an open-source ventilator with off-the-shelf parts that the MVM team hopes will close the gap between supply and demand on a short timescale. The hardware and software designs will be made publicly accessible, so in principle, anyone in the world could make their own version. Galbiati is now working with Elemaster and other manufacturers on the first bulk production and getting ventilators to where they are needed most.

“It has been wonderful to work with such a highly skilled and very motivated group of scientists and engineers,” said Art McDonald, Nobel laureate and head of Canada’s involvement in MVM. “Everyone has been working hard on this because they see it as a way that they can use their skills to help out in this worldwide crisis. We are very grateful for the contributions by our team members and for all the external support that we have received.”


Fermilab is America’s premier national laboratory for particle physics and accelerator research. A U.S. Department of Energy Office of Science laboratory, Fermilab is located near Chicago, Illinois, and operated under contract by the Fermi Research Alliance LLC, a joint partnership between the University of Chicago and the Universities Research Association, Inc. Visit Fermilab’s website at www.fnal.gov and follow us on Twitter at @Fermilab.

Fermilab is supported by the Office of Science of the U.S. Department of Energy. The Office of Science is the single largest supporter of basic research in the physical sciences in the United States and is working to address some of the most pressing challenges of our time. For more information, visit science.energy.gov.

The international Deep Underground Neutrino Experiment, hosted by Fermilab, will be one of the most ambitious attempts ever made at understanding some of the most fundamental questions about our universe. Currently under construction at the Sanford Underground Research Facility in South Dakota, DUNE will provide a massive target for neutrinos. When it’s operational, DUNE will comprise around 70,000 tons of liquid argon — more than enough to fill a dozen Olympic-sized swimming pools — contained in cryogenic tanks nearly a mile underground.

Neutrinos are ubiquitous. They were formed in the first seconds after the Big Bang, even before atoms could form, and they are constantly being produced by nuclear reactions in stars. When massive stars explode and become supernovae, the vast majority of the energy given off in the blast is released as a burst of neutrinos.

In the laboratory, scientists use particle accelerators to make neutrinos. In DUNE’s case, Fermilab accelerators will generate the world’s most powerful high-energy neutrino beam, aiming it at the DUNE neutrino detector 800 miles (1,300 kilometers) away in South Dakota.

When any of these neutrinos, star-born or terrestrial, strikes one of the argon atoms in the DUNE detector, a cascade of particles results. Every time this happens, billions of detector digits are generated, which must be saved and analyzed further by collaborators around the world. The volume of data the detector churns out will be immense. So, while construction continues in South Dakota, scientists around the world are hard at work developing the computing infrastructure necessary to handle the massive volumes of data the experiment will produce.

The goal of the DUNE Computing Consortium is to establish a global computing network that can handle the massive data dumps DUNE will produce by distributing them across the grid. Photo: Reidar Hahn, Fermilab

The first step is ensuring that DUNE is connected to Fermilab with the kind of bandwidth that can carry tens of gigabits of data per second, said Liz Sexton-Kennedy, Fermilab’s chief information officer. As with other aspects of the collaboration, it requires “a well-integrated partnership,” she said. Each neutrino collision in the detector will produce an array of information to be analyzed.

“When there’s a quantum interaction at the center of the detector, that event is physically separate from the next one that happens,” Sexton-Kennedy said. “And those two events can be processed in parallel. So, there has to be something that creates more independence in the computing workflow that can split up the work.”
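The independence Sexton-Kennedy describes can be illustrated with a minimal Python sketch. Everything here is hypothetical: the event layout, the `process_event` stand-in and the thread pool are assumptions made for illustration, not DUNE software. The point is only that events which share no state can be handed to separate workers and processed in any order.

```python
from concurrent.futures import ThreadPoolExecutor


def process_event(event):
    """Hypothetical stand-in for reconstruction: sum one event's hit charges."""
    return event["id"], sum(event["hits"])


def process_all(events, workers=2):
    """Process independent events concurrently and collect results by event id."""
    # No event depends on another, so each one can go to its own worker.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return dict(pool.map(process_event, events))


# Toy events: each carries an id and a short list of made-up hit charges.
events = [{"id": i, "hits": [i, i + 1, i + 2]} for i in range(4)]
results = process_all(events)
```

A thread pool keeps the sketch short; on a real grid the same pattern would send each event, or batch of events, to a different machine.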

Sharing the load

One way to approach this challenge is by distributing the workflow around the world. Mike Kirby of Fermilab and Andrew McNab of the University of Manchester in the UK are the technical leads of the DUNE Computing Consortium, a collective effort by members of the DUNE collaboration and computing experts at partner institutions. Their goal is to establish a global computing network that can handle the massive data dumps DUNE will produce by distributing them across the grid.

“We’re trying to work out a roadmap for DUNE computing in the next 20 years that can do two things,” Kirby said. “One is an event data model,” which means figuring out how to handle the data the detector produces when a neutrino collision occurs, “and the second is coming up with a computing model that can use the conglomerations of computing resources around the world that are being contributed by different institutions, universities and national labs.”

It’s no small task. The consortium includes dozens of institutions, and the challenge is ensuring the computers and servers at each are orchestrated together so that everyone on the project can carry out their analyses of the data. A basic challenge, for example, is making sure a computer in Switzerland or Brazil recognizes a login from a computer at Fermilab.

Coordinating computing resources across a distributed grid has been done before, most notably by the Worldwide LHC Computing Grid, which federates the United States’ Open Science Grid and others around the world. But this is the first time an experiment at this scale led by Fermilab has used this distributed approach.

“Much of the Worldwide LHC Computing Grid design assumes data originates at CERN and that meetings will default to CERN, but as DUNE now has an associate membership of WLCG things are evolving,” said Andrew McNab, DUNE’s international technical lead for computing. “One of the first steps was hosting the monthly WLCG Grid Deployment Board town hall at Fermilab last September, and DUNE computing people are increasingly participating in WLCG’s task forces and working groups.”

“We’re trying to build on a lot of the infrastructure and software that’s already been developed in conjunction with those two efforts and extend it a little bit for our specific needs,” Kirby said. “It’s a great challenge to coordinate all of the computing around the world. In some sense, we’re kind of blazing a new trail, but in many ways, we are very much reliant on a lot of the tools that were already developed.”

Supernovae signals

Another challenge is that DUNE has to organize the data it collects differently from particle accelerator physics experiments.

“For us, a typical neutrino event from the accelerator beam is going to generate something on the order of six gigabytes of data,” Kirby said. “But if we get a supernova neutrino alert,” in which a neutrino burst from a supernova arrives, signaling the cosmic explosion before light from it arrives at Earth, “a single supernova burst record could be as much as 100 terabytes of data.”

One terabyte equals one trillion bytes, an amount of data equal to about 330 hours of Netflix movies. Created in a few seconds, that amount of data is a huge challenge because of the computer processing time needed to handle it. DUNE researchers must begin recording data soon after a neutrino alert is triggered, and it adds up quickly. But it will also offer an opportunity to learn about neutrino interactions that take place inside supernovae while they’re exploding.
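To put those figures side by side, here is a rough back-of-the-envelope calculation in Python. It uses only the numbers quoted in this article (100 terabytes per burst, six gigabytes per beam event, 330 hours of video per terabyte), plus one labeled assumption: a 10-gigabit-per-second link, taken as the low end of the "tens of gigabits" mentioned earlier. The function names are illustrative only.

```python
TB = 10**12  # one terabyte = one trillion bytes, as defined in the text
GB = 10**9

BURST_BYTES = 100 * TB        # upper figure quoted for a supernova burst record
BEAM_EVENT_BYTES = 6 * GB     # typical beam neutrino event, as quoted
NETFLIX_HOURS_PER_TB = 330    # comparison figure used in the article
ASSUMED_LINK_BITS_PER_S = 10 * 10**9  # ASSUMPTION: a 10 Gbit/s network link


def beam_event_equivalents(burst=BURST_BYTES, event=BEAM_EVENT_BYTES):
    """How many typical beam events equal one supernova burst record."""
    return burst / event


def netflix_hours(burst=BURST_BYTES):
    """The burst record expressed as hours of streamed video."""
    return burst / TB * NETFLIX_HOURS_PER_TB


def transfer_hours(burst=BURST_BYTES, link=ASSUMED_LINK_BITS_PER_S):
    """Hours needed to move the burst record over the assumed link."""
    return burst * 8 / link / 3600
```

Under these assumptions, one burst record is comparable to roughly 16,700 beam events or 33,000 hours of video, and would take about 22 hours to move over a single 10-gigabit link.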

McNab said DUNE’s computing requirements are also slightly different because each event it will capture is typically 100 times larger than those recorded by LHC experiments such as ATLAS or CMS.

“So, the computers need more memory — not 100 times more, because we can be clever about how we use it, but we’re pushing the envelope certainly,” McNab said. “And that’s before we even start talking about the huge events if we see a supernova.”

Georgia Karagiorgi, a physicist at Columbia University who leads data selection efforts for the DUNE Data Acquisition Consortium, said a nearby supernova will generate up to thousands of interactions in the DUNE detector.

“That will allow us to answer questions we have about supernova dynamics and about the properties of neutrinos themselves,” she said.

To do so, DUNE scientists will have to combine data on the timing of neutrino arrival, their abundance and what kinds of neutrinos are present.

“If neutrinos have weird, new types of interactions as they’re propagating through the supernova during the explosion, we might expect modifications to the energy distribution of those neutrinos as a function of time” as they are picked up by the detector, Karagiorgi said. “That goes hand-in-hand with very detailed, and also quite computationally intensive, simulations, with different theoretical assumptions going into them, to actually be able to extract our science. We need both the theoretical simulations and the actual data to make progress.”

Gathering that data is a huge endeavor. When a supernova event occurs, “we read out our far-detector modules for about 100 seconds continuously,” Kirby said.

Because the scientists don’t know when a supernova will happen, they have to start collecting data as soon as an alert occurs and could be waiting for 30 seconds or longer for the neutrino burst to conclude. All the while, data could be piling up.

To prevent too much buildup, Kirby said, the experiment will use an approach called a circular buffer, in which memory that doesn’t include neutrino hits is reused, not unlike rewinding and recording over the tape in a video cassette.
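The idea can be sketched in a few lines of Python. This is a generic, textbook ring buffer, not DUNE's data acquisition code; the class name, method names and integer samples are all made up for illustration. Once the buffer is full, each new sample overwrites the oldest one, so memory use stays fixed however long the detector runs.

```python
class CircularBuffer:
    """Fixed-size ring: once full, each new sample overwrites the oldest."""

    def __init__(self, capacity):
        if capacity <= 0:
            raise ValueError("capacity must be positive")
        self._slots = [None] * capacity
        self._next = 0   # index of the slot that will be written next
        self._count = 0  # number of valid samples currently stored

    def write(self, sample):
        """Store a sample, reusing the oldest slot when the buffer is full."""
        self._slots[self._next] = sample
        self._next = (self._next + 1) % len(self._slots)
        self._count = min(self._count + 1, len(self._slots))

    def snapshot(self):
        """Return the stored samples, oldest first."""
        size = len(self._slots)
        start = (self._next - self._count) % size
        return [self._slots[(start + i) % size] for i in range(self._count)]


# Five samples written into three slots: the two oldest are overwritten.
buf = CircularBuffer(3)
for sample in range(5):
    buf.write(sample)
```

In a scheme like the one Kirby describes, an alert would presumably trigger saving the buffer's contents before they are overwritten; that step is left out of this sketch.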

McNab said the supernova aspect of DUNE is also presenting new opportunities for computing collaboration.

“I’m a particle physicist by training, and one of my favorite aspects about working on this project is the way that it connects to other scientific disciplines, particularly astronomy,” he said. In the UK, particle physics and astronomy computing are collectively providing support for DUNE, the Vera C. Rubin Observatory Legacy Survey of Space and Time, and the Square Kilometer Array radio telescopes on the same computers. “And then we have the science aspect that, if we do see a supernova, then we will hopefully be viewing it with multiple wavelengths using these different instruments. DUNE provides an excellent pathfinder for the computing, because we already have real data coming from DUNE’s prototype detectors that needs to be processed.”

Kirby said that the computing effort is leading to exciting new developments in applications on novel architectures, artificial intelligence and machine learning on diverse computer platforms.

“In the past, we’ve focused on doing all of our data processing and analysis on CPUs and standard Intel and PC processors,” he said. “But with the rise of GPUs [graphics processing units] and other computing hardware accelerators such as FPGAs [field-programmable gate arrays] and ASICs [application-specific integrated circuits], software has been written specifically for those accelerators. That really has changed what’s possible in terms of event identification algorithms.”

These technologies are already in use in the on-site data acquisition system, where they reduce the terabytes per second generated by the detectors to the gigabytes per second transferred offline. The challenge that remains for offline computing is figuring out how to centrally manage these applications across the entire collaboration and get answers back from distributed centers across the grid.

“How do we stitch all of that together to make a cohesive computing model that gets us to physics as fast as possible?” Kirby said. “That’s a really incredible challenge.”

This work is supported by the Department of Energy Office of Science.
