Leon Lederman stands outside Wilson Hall at Fermilab on the day he learned he had been awarded the 1988 Nobel Prize.

Leon Lederman was one of a kind.

He was a brilliant physicist, winning the Nobel Prize in 1988 for the discovery of the muon neutrino. He was one of the founders and served as the second director of the U.S. Department of Energy’s Fermi National Accelerator Laboratory in Batavia. As a champion for science education, he helped start the Illinois Math and Science Academy (IMSA) in Aurora. As an educator himself, he spent decades teaching at the Illinois Institute of Technology, inspiring young minds to consider physics as a career.

He was also, according to the people who knew him, a character in the best sense of the word. Many of those people will be on hand on Wednesday, Sept. 25, at 6 p.m. for a special event celebrating Lederman’s life and legacy and looking forward to the future of particle physics. Presented by the Chicago Council on Science and Technology and Fermilab, in conjunction with the Chicago Public Library, the program will include presentations, a question-and-answer panel with physicists and a miniature physics slam featuring students from IMSA.

On hand to share stories and discuss Lederman’s life will be two scientists who worked closely with him, Rocky Kolb and Michael Turner of the University of Chicago, who started Fermilab’s astrophysics program under Lederman’s leadership. Fermilab Deputy Director Joe Lykken will give an overview of the laboratory’s work from Lederman’s era to the present and the future, and Fermilab scientists Kirsty Duffy, Jessica Esquivel and Don Lincoln will join Turner on the ask-a-physicist panel.

As a special treat, three IMSA students will join three Fermilab researchers in a physics slam, a fun competition that pits teams against one another to see which one can explain a scientific concept or principle in the most entertaining and informative way. The audience will then choose the winner. Fermilab hosts a physics slam every year as part of its Arts and Lecture Series, and it is one of the lab’s most popular events.

This event is free of charge and will be held in Pritzker Auditorium at the Harold Washington Library Center, 400 South State Street, in Chicago. Registration is required. For more information, please visit the C2ST website.

Fermilab is America’s premier national laboratory for particle physics and accelerator research. A U.S. Department of Energy Office of Science laboratory, Fermilab is located near Chicago, Illinois, and operated under contract by the Fermi Research Alliance LLC. Visit Fermilab’s website at www.fnal.gov and follow us on Twitter at @Fermilab.

Chicago Council on Science and Technology (C2ST) is a not-for-profit organization that brings researchers, scientists and STEM professionals to you. Our goal is to reignite excitement and passion for science and technology and highlight their relevance in our lives. Visit our website, c2st.org, for a complete listing of our program offerings.

The DOE Office of Science is the single largest supporter of basic research in the physical sciences in the United States and is working to address some of the most pressing challenges of our time. For more information, please visit https://energy.gov/science.

To build the next generation of powerful proton accelerators, scientists need the strongest magnets possible to steer particles close to the speed of light around a ring. For a given ring size, the higher the beam’s energy, the stronger the accelerator’s magnets need to be to keep the beam on course.
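The link between beam energy, ring size and field strength is the beam's magnetic rigidity: for a singly charged particle, B·ρ = p/e, or roughly p [GeV/c] ≈ 0.3 × B [T] × ρ [m], where ρ is the bending radius. The sketch below is a minimal illustration under stated assumptions: the protons are ultra-relativistic (so beam energy is essentially momentum times c), the function names are hypothetical, and the LHC figures in the usage lines (7 TeV per beam, dipole bending radius of about 2,804 meters) come from public LHC design parameters rather than from this article.

```python
# Minimal sketch of magnetic rigidity, B * rho = p / e, for a singly charged particle.
# Assumption: ultra-relativistic protons, so beam energy in GeV ~ momentum in GeV/c.
SPEED_OF_LIGHT = 299_792_458.0  # m/s


def dipole_field_tesla(momentum_gev: float, bend_radius_m: float) -> float:
    """Dipole field needed to keep a particle of this momentum on a circle of this radius."""
    # 1 GeV/c equals 1e9 * e / c in SI units, so p / e = momentum_gev * 1e9 / c.
    return momentum_gev * 1e9 / (SPEED_OF_LIGHT * bend_radius_m)


def max_momentum_gev(field_tesla: float, bend_radius_m: float) -> float:
    """Inverse relation: the highest momentum a given field can steer at this radius."""
    return field_tesla * SPEED_OF_LIGHT * bend_radius_m / 1e9


lhc_radius_m = 2803.95  # assumed LHC dipole bending radius (public design value)
print(f"7 TeV in an LHC-sized ring needs {dipole_field_tesla(7000.0, lhc_radius_m):.2f} T")
print(f"15 T in the same ring steers {max_momentum_gev(15.0, lhc_radius_m) / 1000:.1f} TeV")
```

Because the required field grows linearly with energy at a fixed bending radius, stronger magnets alone raise the reachable energy only in proportion to the field; reaching energies several times higher also depends on the size of the ring.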

Scientists at the Department of Energy’s Fermilab have announced that they achieved the highest magnetic field strength ever recorded for an accelerator steering magnet, setting a world record of 14.1 teslas with the magnet cooled to 4.5 kelvins, or about minus 452 degrees Fahrenheit. The previous record of 13.8 teslas, achieved at the same temperature, was held for 11 years by Lawrence Berkeley National Laboratory.
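For readers who want to check the temperature figure, the conversion from kelvins to degrees Fahrenheit is a one-line calculation. The helper name below is hypothetical; it shows that 4.5 kelvins works out to about minus 451.6 degrees Fahrenheit.

```python
def kelvin_to_fahrenheit(kelvin: float) -> float:
    """Convert a temperature in kelvins to degrees Fahrenheit."""
    celsius = kelvin - 273.15  # the kelvin scale is offset from Celsius by 273.15
    return celsius * 9.0 / 5.0 + 32.0


print(f"4.5 K = {kelvin_to_fahrenheit(4.5):.1f} °F")  # prints "4.5 K = -451.6 °F"
```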

That’s more than a thousand times stronger than the refrigerator magnet holding your grocery list in place.

The achievement is a remarkable milestone for the particle physics community, which is studying designs for a future collider that could succeed the powerful Large Hadron Collider, the 17-mile-circumference machine that has operated at CERN since 2009. Such a machine would need to accelerate protons to energies several times higher than those at the LHC.

And that calls for steering magnets of about 15 teslas, far stronger than the LHC’s, which operate at about 8.3 teslas.

“We’ve been working on breaking the 14-tesla wall for several years, so getting to this point is an important step,” said Fermilab scientist Alexander Zlobin, who leads the project at Fermilab. “We got to 14.1 teslas with our 15-tesla demonstrator magnet in its first test. Now we’re working to draw one more tesla from it.”

The success of a future high-energy hadron collider depends crucially on viable high-field magnets, and the international high-energy physics community is encouraging research toward the 15-tesla niobium-tin magnet.

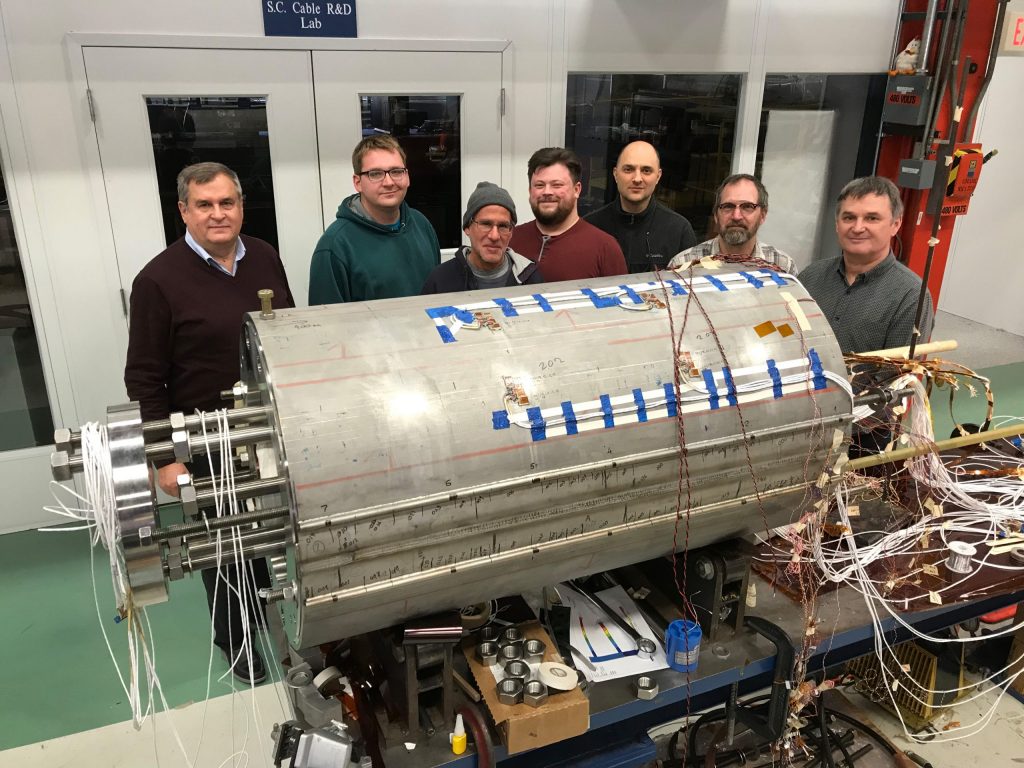

Fermilab recently achieved a magnetic field strength of 14.1 teslas at 4.5 kelvins on an accelerator steering magnet — a world record. Photo: Thomas Strauss

At the heart of the magnet’s design is an advanced superconducting material called niobium-tin.

Electrical current flowing through the material generates a magnetic field. When the material is cooled to a very low temperature, the current encounters no resistance, so it loses no energy and generates no heat. All of the current contributes to the creation of the magnetic field. In other words, you get lots of magnetic bang for the electrical buck.
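The "no heat" point follows from Joule heating: a conductor carrying current I through resistance R dissipates power P = I²R. The sketch below compares a hypothetical normal conductor with a superconductor at the same current; the 10-kiloampere current and 1-milliohm resistance are illustrative assumptions, not parameters of the Fermilab magnet, and the function name is hypothetical.

```python
def joule_heating_watts(current_amps: float, resistance_ohms: float) -> float:
    """Steady-state resistive power loss, P = I**2 * R."""
    return current_amps ** 2 * resistance_ohms


# Hypothetical illustration values, not parameters of any real magnet.
CURRENT_A = 10_000.0
NORMAL_RESISTANCE_OHM = 1e-3  # an ordinary resistive conductor
SUPERCONDUCTING_RESISTANCE_OHM = 0.0  # zero DC resistance in the superconducting state

normal_loss = joule_heating_watts(CURRENT_A, NORMAL_RESISTANCE_OHM)
sc_loss = joule_heating_watts(CURRENT_A, SUPERCONDUCTING_RESISTANCE_OHM)
print(f"resistive: {normal_loss / 1e3:.0f} kW, superconducting: {sc_loss:.0f} W")
```

Because the loss scales with the square of the current, the advantage of zero resistance grows quickly at the very large currents that high-field magnets need.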

The strength of the magnetic field depends on how much current the material can carry. Unlike the niobium-titanium used in the LHC’s existing magnets, niobium-tin can carry the amount of current needed to make 15-tesla magnetic fields. But niobium-tin is brittle and susceptible to breaking when subjected to the enormous forces at work inside an accelerator magnet.
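To see why the field depends on the current the conductor can carry, consider the textbook idealization of an accelerator dipole: a thick coil whose current density varies as J₀·cos θ around the bore produces a uniform bore field B = μ₀·J₀·w/2, where w is the coil thickness. The sketch below assumes that idealized geometry with no iron yoke; the function name is hypothetical, and the 250 and 500 A/mm² and 30 mm inputs are illustrative, not the parameters of the Fermilab demonstrator.

```python
import math

# Vacuum permeability in T*m/A (classical exact value; the current SI value differs negligibly).
MU_0 = 4e-7 * math.pi


def cos_theta_dipole_field(j0_a_per_mm2: float, coil_width_mm: float) -> float:
    """Bore field of an ideal cos-theta coil without iron: B = mu0 * J0 * w / 2."""
    j0 = j0_a_per_mm2 * 1e6  # A/mm^2 -> A/m^2
    width_m = coil_width_mm * 1e-3  # mm -> m
    return MU_0 * j0 * width_m / 2.0


# Hypothetical inputs: doubling the current density doubles the field.
for j0 in (250.0, 500.0):
    print(f"J0 = {j0:.0f} A/mm^2 -> B = {cos_theta_dipole_field(j0, 30.0):.2f} T")
```

In a real magnet the usable current density is capped by the superconductor's critical current, which falls as the field rises; that ceiling is where the difference between niobium-tin and niobium-titanium matters.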

So the Fermilab team developed a magnet design that would shore up the coil against every stress and strain it could encounter during operation. Several dozen round wires were twisted into cables in a specific way, enabling the cables to meet the requisite electrical and mechanical specifications. These cables were wound into coils and heat-treated for approximately two weeks, at a peak temperature of about 1,200 degrees Fahrenheit (about 650 degrees Celsius), so that the niobium-tin wires become superconducting at operating temperatures. The team encased several coils in a strong, innovative structure composed of an iron yoke with aluminum clamps and a stainless-steel skin. This structure stabilizes the coils against the huge electromagnetic forces that could otherwise deform the brittle coils and degrade the niobium-tin wires.
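One way to gauge how large those electromagnetic forces are is the magnetic pressure, p = B²/(2μ₀), which sets the order of magnitude of the stress a magnet structure must contain. This is a rough scale estimate, not a calculation of the actual coil stresses in the Fermilab design, and the function name is hypothetical. It shows why the forces climb quickly as the field rises: they grow with the square of the field.

```python
import math

# Vacuum permeability in T*m/A (classical exact value; the current SI value differs negligibly).
MU_0 = 4e-7 * math.pi


def magnetic_pressure_mpa(field_tesla: float) -> float:
    """Magnetic pressure B**2 / (2 * mu0), converted from pascals to megapascals."""
    return field_tesla ** 2 / (2.0 * MU_0) / 1e6


for field in (14.1, 15.0):
    print(f"{field:.1f} T -> {magnetic_pressure_mpa(field):.0f} MPa")
```

At 14.1 teslas this comes to roughly 79 megapascals, several hundred times atmospheric pressure.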

The Fermilab group took every known design feature into consideration, and it paid off.

“This is a tremendous achievement in a key enabling technology for circular colliders beyond the LHC,” said Soren Prestemon, a senior scientist at Berkeley Lab and director of the multilaboratory U.S. Magnet Development Program, which includes the Fermilab team. “This is an exceptional milestone for the international community that develops these magnets, and the result has been enthusiastically received by researchers who will use the beams from a future collider to push forward the frontiers of high-energy physics.”

And the Fermilab team is geared up to make their mark in the 15-tesla territory.

“There are so many variables to consider in designing a magnet like this: the field parameters, superconducting wire and cable, mechanical structure and its performance during assembly and operation, magnet technology, and magnet protection during operation,” Zlobin said. “All of these issues are even more important for magnets with record parameters.”

Over the next few months, the group plans to reinforce the coil’s mechanical support and then retest the magnet this fall. They expect to achieve the 15-tesla design goal.

And they’re setting their sights even higher for the future.

“Based on the success of this project and the lessons we learned, we’re planning to advance the field in niobium-tin magnets for future colliders to 17 teslas,” Zlobin said.

It doesn’t stop there. Zlobin says they may be able to design steering magnets that reach a field of 20 teslas using special inserts made of new advanced superconducting materials.

Call it a field goal.

The project is supported by the Department of Energy Office of Science. It is a key part of the U.S. Magnet Development Program, which includes Fermilab, Brookhaven National Laboratory, Lawrence Berkeley National Laboratory and the National High Magnetic Field Laboratory.


Media contacts

- Andre Salles, Fermilab Office of Communication, media@fnal.gov, +1-630-840-3351

- Renée Dillinger-Reiter, PRISMA+ Cluster of Excellence, Johannes Gutenberg University Mainz, renee.dillinger@uni-mainz.de, +49 6131 39-21845

Neutrinos are among the most abundant particles in nature, yet very little is known about these mysterious particles and their role in the universe. Researchers think neutrinos might hold the key to understanding why matter exists and how an exploding star transitions into a black hole.

Now Johannes Gutenberg University Mainz (JGU Mainz) in Germany, which has conducted neutrino research for many years, has taken a significant step toward participating in the next big neutrino experiment: the Deep Underground Neutrino Experiment (DUNE), hosted by Fermi National Accelerator Laboratory in the United States. More than 1,000 scientists from over 30 countries are collaborating on DUNE.

The two institutions have announced that they have signed an agreement to jointly appoint an internationally renowned researcher who will strengthen the experimental particle physics research program at JGU Mainz and advance a German contribution to DUNE.

This is the first Fermilab joint agreement with a university in Germany.


“This success underlines, once again, the exceptional reputation of the PRISMA+ Cluster of Excellence at our university and the outstanding quality of research undertaken there, thus confirming the international standing of our physicists in Mainz,” said Prof. Georg Krausch, President of Johannes Gutenberg University Mainz.

JGU Mainz and other German research institutions already play major roles in international neutrino experiments, including IceCube, Borexino and JUNO. In recent years, German scientists have shown interest in joining DUNE, and in October the DESY laboratory in Hamburg will host an international workshop on contributions to the DUNE near detector, which will be installed at Fermilab.

“Mainz University has a great tradition in particle physics,” said Fermilab Director Nigel Lockyer. “We are very pleased to have a joint faculty position that will seed stronger ties between our institutions.”

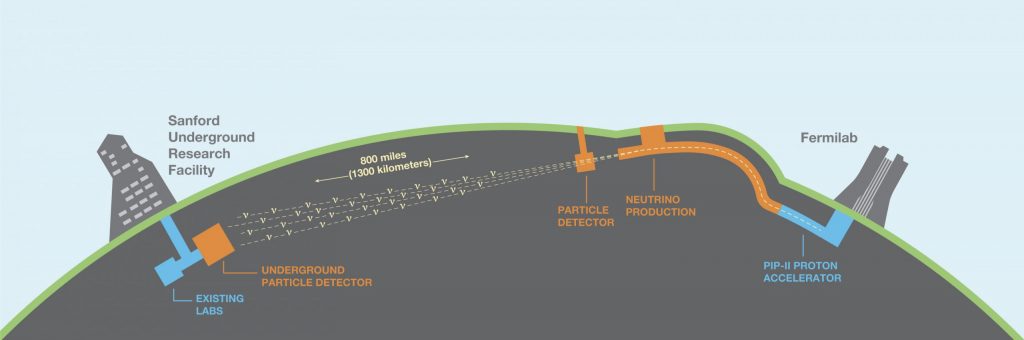

DUNE will send neutrino and antineutrino beams 1,300 kilometers straight through the earth to find out whether neutrinos might be responsible for the dominance of matter over antimatter in our universe. The beams originate at the Fermilab particle accelerator complex near Chicago and will travel through dirt and rock (no tunnel needed) to the enormous particle detectors located 1.5 kilometers underground at the Sanford Underground Research Facility in South Dakota. Prep work is underway to excavate about 800,000 tons of rock to create the huge caverns for the DUNE far detectors.
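Because the beam travels along a straight chord rather than following the curve of the earth, it must be aimed downward at its origin and passes well below the surface midway. A minimal sketch of that geometry, assuming a spherical earth with a mean radius of 6,371 kilometers and ignoring the detectors' depth and surface elevation, estimates the launch dip angle, the deepest point of the path and the neutrinos' travel time at essentially the speed of light. The function name is hypothetical, and these are geometric estimates, not DUNE design figures.

```python
import math

EARTH_RADIUS_KM = 6371.0  # assumed mean radius, spherical-earth approximation
SPEED_OF_LIGHT_KM_PER_S = 299_792.458


def chord_geometry(baseline_km: float, radius_km: float = EARTH_RADIUS_KM) -> tuple[float, float]:
    """Return (dip angle below horizontal in degrees, maximum depth in km) of a straight chord."""
    half = baseline_km / 2.0
    # The angle between a chord and the tangent at its end is half the central angle.
    dip_rad = math.asin(half / radius_km)
    # Sagitta: how far the chord's midpoint lies below the surface.
    max_depth_km = radius_km - math.sqrt(radius_km ** 2 - half ** 2)
    return math.degrees(dip_rad), max_depth_km


baseline_km = 1300.0
dip_deg, depth_km = chord_geometry(baseline_km)
travel_ms = baseline_km / SPEED_OF_LIGHT_KM_PER_S * 1e3  # neutrinos move at essentially c
print(f"dip {dip_deg:.1f} deg, deepest point {depth_km:.0f} km, travel time {travel_ms:.2f} ms")
```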

Two “small” prototype detectors, each about the size of a three-story house, have been built at the European research center CERN, and the construction of components for the four full-size far detectors, each 20 times larger than one of the prototype detectors, will begin next year.

“We hope that the Mainz group will contribute their innovative detector concept to the DUNE near detector, which will allow us to analyze the neutrino beam at Fermilab before it goes through the earth,” said DUNE spokesperson Stefan Söldner-Rembold, University of Manchester, UK. “This is crucial for understanding the data the neutrinos produce when they arrive in South Dakota.”

The newly recruited neutrino scientist will be based in Mainz and become a member of the PRISMA+ Cluster of Excellence for precision physics, fundamental interactions and structure of matter. The appointed scientist also will have the opportunity for extended research stays at Fermilab.

How did you end up at Fermilab?

I’m from Georgia — a beautiful country in Eastern Europe, in the Caucasus Mountains. My husband, Guram Chlachidze, who also works at Fermilab, and I moved to the U.S. about 18 years ago. We were particle physicists, and my husband was invited as a guest scientist at Fermilab.

It was a difficult time in Georgia. It was the period after the Soviet Union broke up, and the country was fighting for its independence. So receiving this invitation for Guram to work at Fermilab was just super exciting.

What was it like when you arrived at Fermilab?

When we arrived in the U.S., my husband started working at Fermilab, and our daughter was born quite soon after, within a few months. I put my career on hold and adjusted to my new life.

I was a new mom in a new country, with everything new. I spent about three years as a stay-at-home mom. Even though it was so challenging and so difficult, the people around us were just super nice, and this was what gave me strength to stay in the U.S. and start our new life here.

We are citizens now. Even though we visit Georgia every year in the summer to see our parents, family and friends, we feel at home here. Fermilab is our home, and the U.S. is our country.

Ketevan Akhobadze stands beside exhibits that explain concepts behind particle accelerator technology.

What is your role at Fermilab?

I am in charge of Lederman Science Center exhibit development, upgrade, operations and maintenance. These exhibits are about particle physics, developed for middle school students and the general public.

The challenge and excitement of this job is that you get to create a hands-on activity that communicates the complicated ideas of particle physics and makes them accessible and understandable for someone without any background in physics.

The work we are doing with these exhibits is trying to build a bridge between Fermilab science and the public, to get people excited about particle physics.

Of course, we don’t expect to teach them physics in a few hours. That’s impossible. But we are hopeful that these exhibits will kick-start their interest in Fermilab science and STEM in general. This is what gives me motivation and makes me excited about my job.

What is your favorite part of your job?

Coming up with a new exhibit — brainstorming ideas about it and putting it together in my mind. Then I share it with the exhibit committee, and I get my colleagues’ feedback on it. We put together a prototype and, if everything works well, we build a new exhibit.

In the last five years, we’ve built and upgraded many exhibits; one of them is about gravitational lensing. I came up with this idea, and my friends liked it, so we put together a prototype. Then, I literally built it in my garage.

Currently, I’m working on a new exhibit about neutrino mass. This is a very challenging exhibit to put together because we know very little about neutrino masses — we only know upper limits for them.

Scientists don’t know at this point exactly what the three neutrino masses are. The exhibit will introduce our visitors to the mass scale of subatomic particles — where different particles fit on that scale and how Fermilab scientists use underground neutrino experiments to explore the neutrino mass mystery.

What do you like to do when you’re not at work?

Traveling is probably most fun for me beyond my work. We go to Georgia to see our family, and we usually stop somewhere in Europe to see places. This is something that really makes me happy.

I love remodeling at home: I change colors and relocate furniture. My husband doesn’t like this, but I do it anyway. I also love cooking and baking for my family.