
Charged particles, like protons and electrons, can be characterized by the trails of atoms these particles ionize. In contrast, neutrinos and their antiparticle partners almost never ionize atoms, so their interactions have to be pieced together from how they break nuclei apart.

But when the breakup produces a neutron, it can silently carry away a critical piece of information: some of the antineutrino’s energy.

Fermilab’s MINERvA collaboration recently published a paper quantifying the neutrons produced when antineutrinos interact in a plastic target.

The way antineutrinos change between their various types could help explain why the modern universe is dominated by matter. The most promising model of how this behavior relates particles and antiparticles depends on antineutrino energy. However, neutrons can leave holes in the puzzle of an antineutrino’s identity because they carry away energy and are produced in different quantities by neutrinos and antineutrinos. This MINERvA result is aimed at improving predictions of how neutrons could affect current and future neutrino experiments, including the international Deep Underground Neutrino Experiment, hosted by Fermilab.

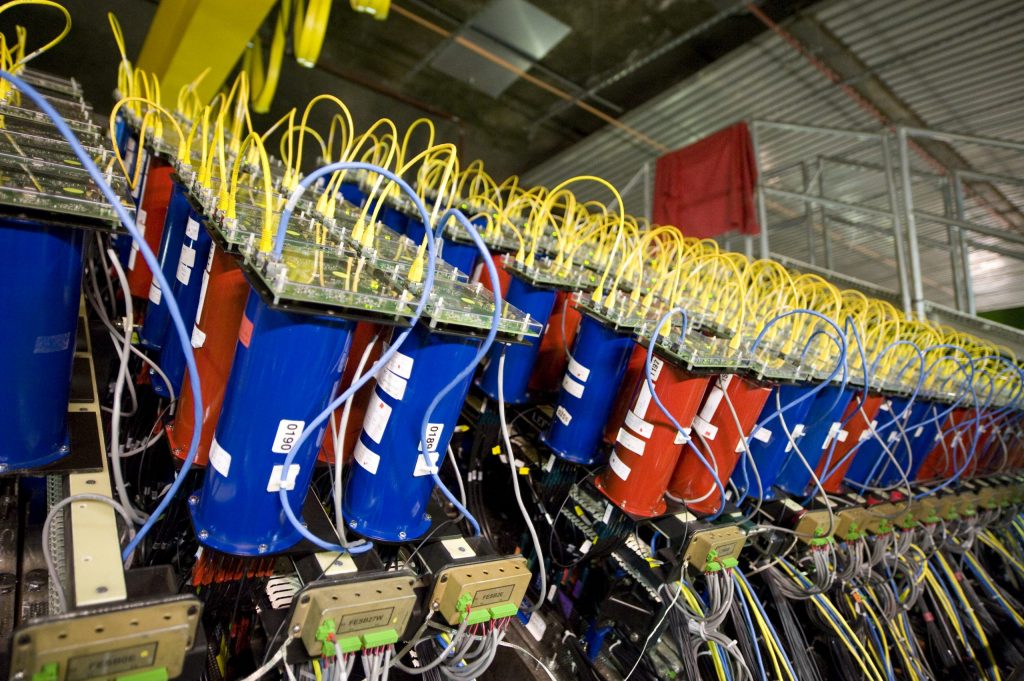

The MINERvA detector at Fermilab helps scientists analyze neutrino interactions with atomic nuclei. Photo: Reidar Hahn

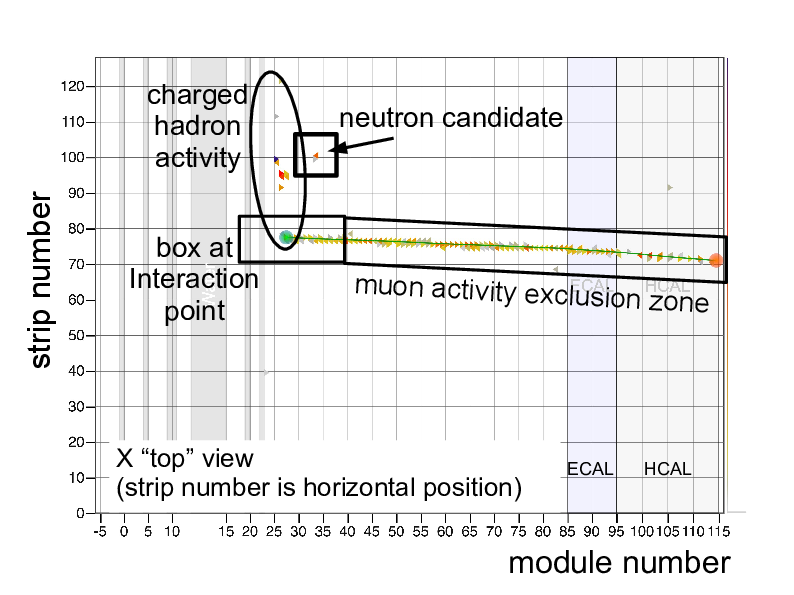

In this study, MINERvA looked for antineutrino interactions that produce neutrons. The antineutrino interactions that MINERvA studies look like one or more trails of ionized atoms all pointing back to a single nucleus. Unlike charged particles, neutrons can travel many tens of centimeters from an antineutrino interaction before being detected. So, the MINERvA collaboration characterized neutron activity as pockets of ionized atoms spatially isolated from both charged particle tracks and the interaction point.
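The isolation idea described above can be sketched in a few lines of Python. This is only an illustration, not MINERvA's reconstruction code: the function names, the 3D point representation and the 10 cm isolation distance are all assumptions chosen for the example.

```python
import math

# Hypothetical isolation distance in centimeters; not a MINERvA parameter.
MIN_DIST_CM = 10.0

def is_isolated(cluster, vertex, track_points, min_dist_cm=MIN_DIST_CM):
    """True if a pocket of ionization is far from the interaction point
    and from every sampled point along the charged-particle tracks."""
    if math.dist(cluster, vertex) < min_dist_cm:
        return False
    return all(math.dist(cluster, p) >= min_dist_cm for p in track_points)

def neutron_candidates(clusters, vertex, track_points, min_dist_cm=MIN_DIST_CM):
    """Keep only the clusters that pass the isolation requirement."""
    return [c for c in clusters if is_isolated(c, vertex, track_points, min_dist_cm)]

# Toy event: one track along the z axis, sampled every 5 cm.
vertex = (0.0, 0.0, 0.0)
track_points = [(0.0, 0.0, float(z)) for z in range(0, 100, 5)]
clusters = [
    (2.0, 1.0, 3.0),    # too close to the interaction point
    (30.0, 0.0, 20.0),  # far from both the vertex and the track
    (1.0, 0.0, 50.0),   # sits on top of the track
]
candidates = neutron_candidates(clusters, vertex, track_points)
print(candidates)
```

In this toy event only the second cluster survives, because it is the only one well separated from both the interaction point and the track.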

An antineutrino interaction can produce other types of neutral particles, which can fake a neutron interaction, and charged particles, which can confuse a neutron counting measurement by themselves ejecting neutrons from nuclei. In addition, low-momentum charged particles can end up in a mass of ionization that is too close to the interaction point to be counted separately and that also masks evidence of neutral particles. So, neutrons can be counted more accurately in antineutrino interactions that produce few additional particles. MINERvA scientists used conservation-of-momentum calculations to avoid interactions that produced many charged particles.

This graphic illustrates a neutrino interaction in the MINERvA detector. The rectangular box highlights the spot where a neutrino interacted inside the detector. The square box just above it highlights the appearance of a neutron resulting from the neutrino interaction. Image: MINERvA

Other experiments’ measurements of neutrons from antineutrinos have waited for each neutron to lose most of its energy before it can be counted. However, neutrons from MINERvA’s antineutrino sample have enough energy to knock other neutrons out of nuclei they collide with. This chain reaction changes both the original neutrons’ energies and the number of neutrons detected. This result focuses on signs of neutrons within tens of nanoseconds of an antineutrino interaction.

By understanding neutron production in concert with MINERvA’s characterization of antineutrino interactions on many nuclei, future oscillation studies can quantify how undetected neutrons could affect their conclusions about the differences between neutrinos and antineutrinos.

Andrew Olivier is a physicist at the University of Rochester and member of the MINERvA collaboration.

Why is our universe accelerating in its expansion? If Einstein’s theory of general relativity is correct, then the dark energy that drives this expansion accounts for nearly 70% of the total energy in the universe. However, precise measurements of the history of this expansion may reveal that new dynamic forces are in play. The Dark Energy Survey has combined its four primary cosmological probes for the first time in order to constrain the properties of dark energy. These first combined constraints are competitive with previous experiments and will improve as more data is analyzed.

The Dark Energy Survey is the first experiment to demonstrate the immense power and promise of this combined-probes approach to survey design. The combined-probes approach is the basis for all major next-generation dark energy experiments in the 2020s, including the Large Synoptic Survey Telescope. It enables scientists to make the most precise measurement of dark energy possible while protecting against measurement bias.

Researchers used the Blanco telescope in conducting the Dark Energy Survey. The Milky Way is on the left of the sky, with the Magellanic clouds in the center. Photo: Reidar Hahn

Dark energy is the mysterious phenomenon that is accelerating the universe’s expansion. To get a firmer grasp on dark energy’s nature, scientists take various measurements of celestial objects, analyzing the data to determine how dark energy affects the growth of our universe.

Researchers model dark energy with an equation of state, which is related to the rate at which the universe grows over time. A cosmological constant in Einstein’s field equations of general relativity predicts that this equation of state is constant in time, with a value of -1.
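For readers who want the notation, the equation of state is usually written as the ratio of dark energy’s pressure to its energy density. The symbols below are standard textbook conventions rather than anything defined in this article: $w$ is the equation-of-state parameter, $p$ the pressure, $\rho c^{2}$ the energy density and $a$ the cosmic scale factor.

$$
w = \frac{p}{\rho c^{2}}, \qquad \rho(a) \propto a^{-3(1+w)} \quad \text{(for constant } w\text{)}
$$

With $w = -1$ the exponent vanishes, so the dark energy density stays the same as space expands, which is the behavior of a cosmological constant.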

For the first time, the Dark Energy Survey has combined four approaches to constrain the dark energy equation of state. The four approaches measure the distances to supernovae, which are the explosions of dying stars; the regular variations in the density of galaxies, called baryon acoustic oscillations; the way galaxies cluster together; and the way light from distant galaxies is distorted (lensed) by structure in the universe. This combination is one of the most powerful measurements ever made by a dark energy experiment. The combined result agrees with the result obtained by combining many previous cosmological data sets: the dark energy equation of state appears consistent with a cosmological constant. The Dark Energy Survey also demonstrates for the first time that researchers can use similar surveys to independently constrain the amount of ordinary matter in the universe, an important check against measurements from the primordial universe nearly 14 billion years ago.

Arguably, the most important aspect of this measurement is that it is the first time scientists have confirmed this result to such precision in an analysis that was protected against observer bias. This is important because the Dark Energy Survey is now making the most precise measurements of dark energy ever, and when the standard cosmological model and all previous evidence suggest a cosmological constant explanation for dark energy, researchers must do everything they can to limit the possibility of unconscious biases in their analyses.

Michael Troxel is a Duke University physicist on the Dark Energy Survey.