Fermilab researchers supercharge neural networks, boosting potential of AI to revolutionize particle physics

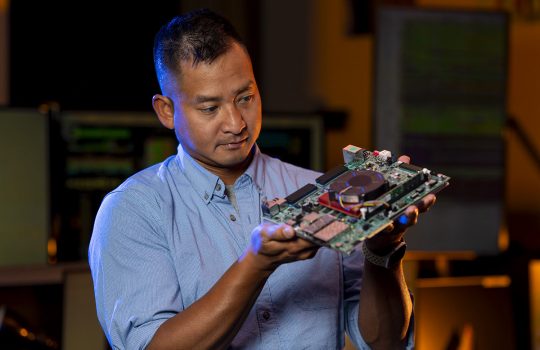

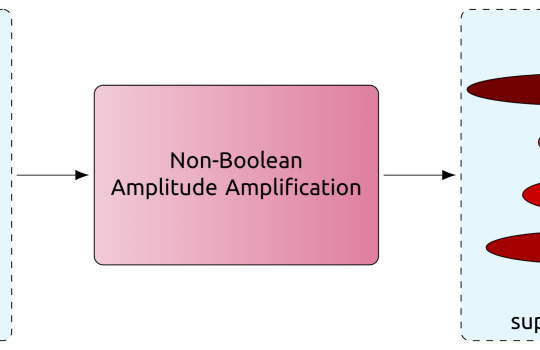

Fermilab researchers have provided expertise and leadership in developing an open-source framework for designing hardware that can make split-second decisions. These advances aim to help sort and prioritize the enormous volumes of data produced by some of humanity's most ambitious physics experiments.