Fermilab drives progress for national AI Genesis Mission

- AI

- artificial intelligence

- astrophysics

- computing

- emerging technologies

- microelectronics

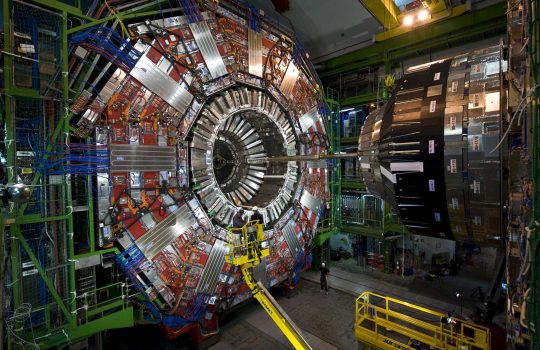

- particle accelerator

- particle physics

- theory

The Genesis Mission draws on the U.S. Department of Energy’s 17 national laboratories, including Fermilab, along with American research universities and industry partners. The collaborative effort aims to accelerate innovation by integrating artificial intelligence across the national research landscape.